As data ecosystems grow, so does the complexity of managing them. What starts as a few automated scripts running on a schedule quickly spirals into a tangled web of dependencies. When a pipeline breaks, finding the root cause becomes a time-consuming forensic exercise, and silent failures can corrupt business dashboards before anyone notices.

To bring order, visibility, and reliability to our data infrastructure, our engineering team recently implemented a centralized orchestration layer using Apache Airflow. By treating our data pipelines as code, we transformed a fragile system into a robust, automated engine.

Here is a look at how we architected this solution.

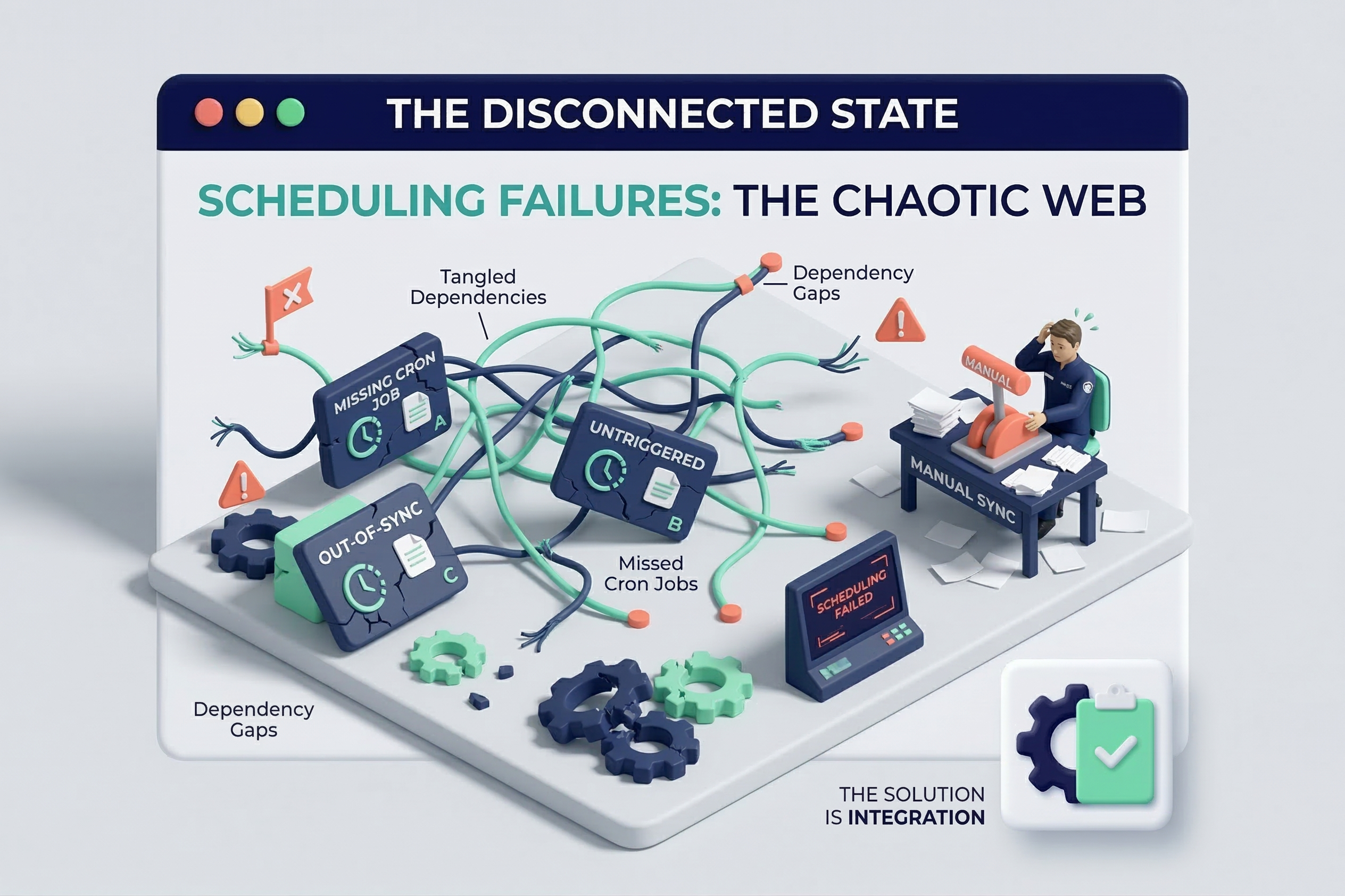

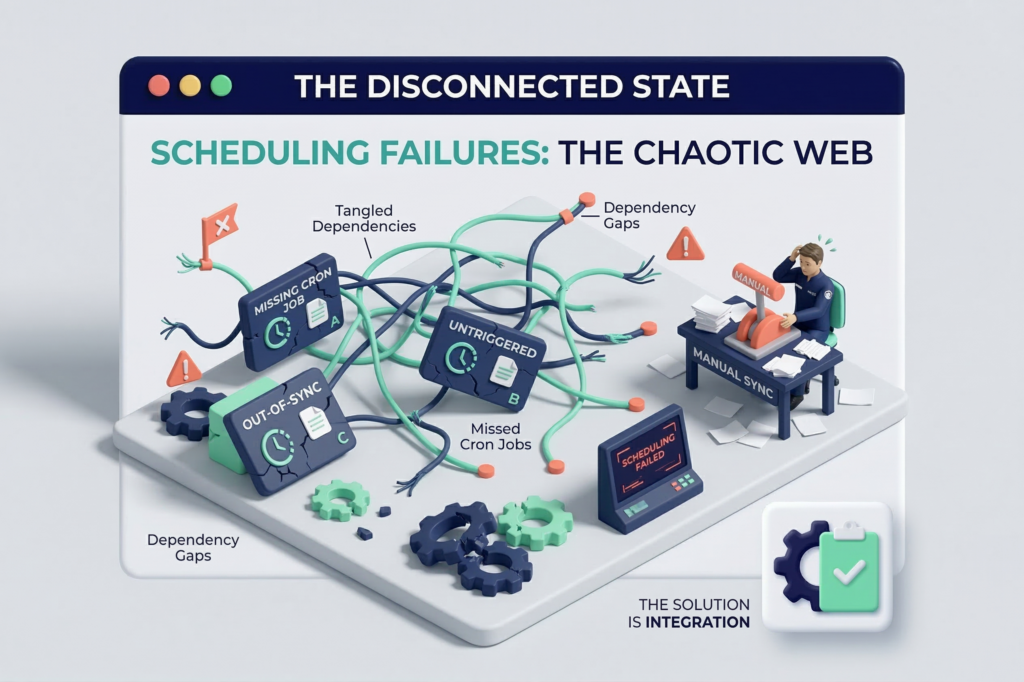

The Challenge: “Cron Job” Chaos

Prior to this project, data processes were scheduled independently. Extraction scripts, data warehouse loads, and transformation models were running on disconnected schedules without any awareness of each other.

The primary pain points included:

- Blind Spots: When an upstream data pull failed, downstream transformations still ran, resulting in inaccurate reporting and wasted compute resources.

- Dependency Hell: Managing the execution order of tasks across different tools required complex, custom workarounds.

- Lack of Alerting: Engineers had to manually check logs to ensure daily runs were successful, leading to delayed responses when errors inevitably occurred.

Our Solution: Centralized Orchestration as Code

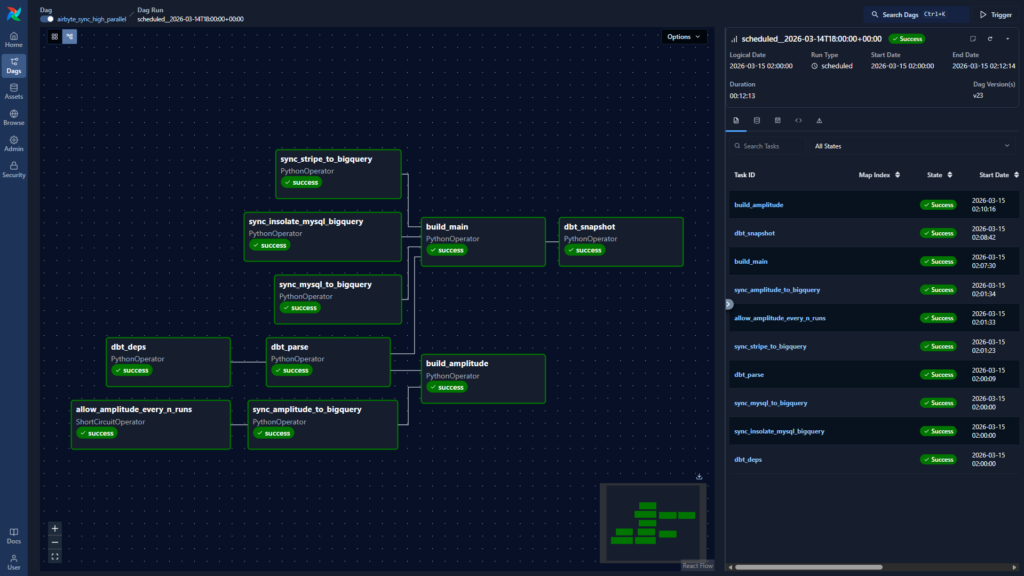

We needed a tool that could act as the “control room” for our entire data stack. We chose Apache Airflow because of its Python-based flexibility, dynamic DAG (Directed Acyclic Graph) generation, and rich ecosystem of operators that integrate seamlessly with our existing infrastructure (including modern tools like dbt, Docker, and cloud data warehouses).

The Architecture

Our team deployed a highly available Airflow environment to manage the end-to-end data lifecycle.

- Pipeline as Code: We authored all workflows as Python DAGs. This allowed us to version control our pipelines, conduct peer reviews, and deploy changes through a standard CI/CD process.

- Dependency Management: We configured explicit upstream and downstream dependencies. Now, a data transformation step runs only if the extraction step before it completes successfully.

- Containerized Execution: To avoid dependency conflicts between tasks, we use Airflow to trigger containerized workloads. Whether a task runs a simple Python script or a complex machine learning model, its environment remains isolated and predictable.

- Integrated Ecosystem: Airflow was configured to orchestrate everything from our ingestion tools to our transformation layer, tying disparate systems together into a unified workflow.
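
To make the dependency behavior concrete, here is a minimal sketch that uses only the Python standard library. It is not Airflow code and not our production pipeline; it only mimics Airflow's default `all_success` trigger rule, under which a task runs only when every upstream task has succeeded. The task names (`extract`, `load`, `transform`) and the `run_pipeline` helper are hypothetical. In an actual Airflow DAG file the same ordering would be declared with the bitshift operator, as in `extract >> load >> transform`.

```python
from graphlib import TopologicalSorter

# Hypothetical three-step pipeline: each task maps to the tasks it depends on.
DEPENDENCIES = {
    "extract": set(),
    "load": {"extract"},
    "transform": {"load"},
}


def run_pipeline(tasks, dependencies):
    """Run tasks in dependency order, skipping any task whose upstream did not succeed.

    `tasks` maps a task name to a zero-argument callable.
    Returns a dict of task name -> "success", "failed", or "upstream_failed".
    """
    states = {}
    # static_order() yields every task after all of its dependencies.
    for name in TopologicalSorter(dependencies).static_order():
        upstream = dependencies.get(name, set())
        if any(states[u] != "success" for u in upstream):
            # Same idea as Airflow's `all_success` rule: do not run downstream work.
            states[name] = "upstream_failed"
            continue
        try:
            tasks[name]()
            states[name] = "success"
        except Exception:
            states[name] = "failed"
    return states


def failing_extract():
    # Simulates the upstream data pull failing.
    raise ConnectionError("source API unavailable")


states = run_pipeline(
    {"extract": failing_extract, "load": lambda: None, "transform": lambda: None},
    DEPENDENCIES,
)
print(states)
```

With the extraction step failing, neither the load nor the transformation runs, which is exactly the "blind spot" that independent cron schedules could not prevent.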

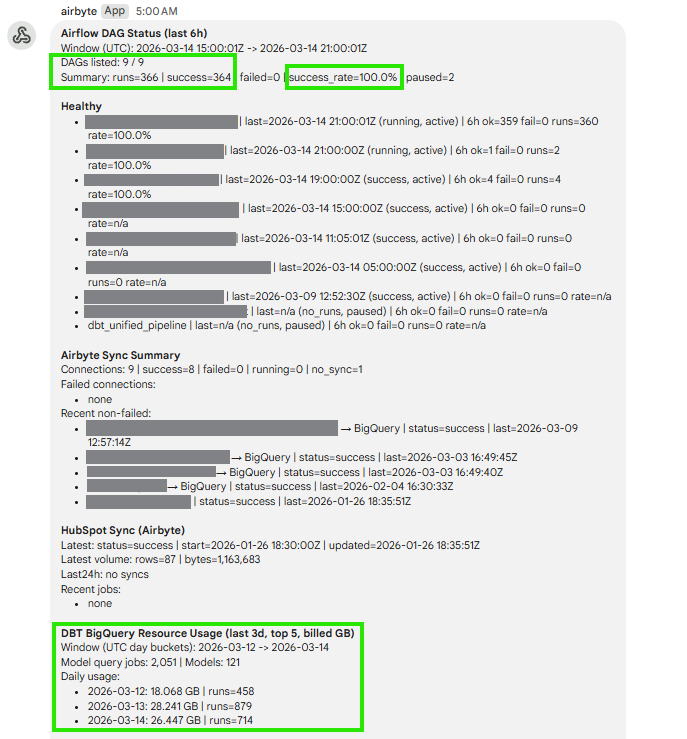

The Results: Reliability and Peace of Mind

By migrating our data workflows to Airflow, we drastically reduced operational overhead and increased confidence in our data products.

Key project outcomes:

- Proactive Alerting: We implemented Slack and email integrations. The engineering team is now notified as soon as a task fails, with a direct link to the relevant error logs.

- Automated Retries: Temporary API glitches or network timeouts no longer wake up engineers in the middle of the night; Airflow automatically retries failed tasks according to the retry count and delay we configure for each task.

- Complete Observability: The Airflow UI provides business and technical stakeholders with a real-time, visual overview of pipeline health and execution history.
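
The retry and alerting behavior described above can be sketched roughly as follows. This is an illustration under stated assumptions, not our production configuration: the webhook URL, email address, retry count, and retry delay are placeholders, and the helper names `build_failure_message` and `notify_slack_on_failure` are hypothetical. The keys in `default_args` (`retries`, `retry_delay`, `email`, `email_on_failure`, `on_failure_callback`) are task arguments in Airflow 2.x, and the callback reads `task_instance` and `exception` from the context that Airflow passes to failure callbacks.

```python
import json
import urllib.request
from datetime import timedelta

# Placeholder webhook URL; a real one would be loaded from a secrets backend.
SLACK_WEBHOOK_URL = "https://hooks.slack.com/services/EXAMPLE"


def build_failure_message(context):
    """Format an alert from the context Airflow passes to a failure callback."""
    ti = context["task_instance"]
    return (
        f"Task failed: {ti.dag_id}.{ti.task_id}\n"
        f"Error: {context.get('exception')}\n"
        f"Logs: {ti.log_url}"
    )


def notify_slack_on_failure(context):
    """Post the failure message to a Slack incoming webhook."""
    payload = json.dumps({"text": build_failure_message(context)}).encode("utf-8")
    request = urllib.request.Request(
        SLACK_WEBHOOK_URL,
        data=payload,
        headers={"Content-Type": "application/json"},
    )
    urllib.request.urlopen(request, timeout=10)


# Intended to be passed as `default_args` to a DAG so every task inherits it.
default_args = {
    "retries": 3,                          # assumed value: retry up to 3 times
    "retry_delay": timedelta(minutes=5),   # assumed value: wait between attempts
    "email": ["data-alerts@example.com"],  # hypothetical address
    "email_on_failure": True,
    "on_failure_callback": notify_slack_on_failure,
}
```

Because the failure callback fires only once a task has exhausted its retries, transient errors are absorbed quietly and an alert goes out only when human attention is actually needed.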

Looking Forward

A modern data platform is only as reliable as the system orchestrating it. With Apache Airflow in place, our infrastructure is now scalable, resilient, and ready to take on substantially larger data volumes and more pipelines.

If your data team is spending more time fixing broken pipelines than building new data products, it might be time to rethink your orchestration strategy. Reach out to us today to see how we can bring reliability to your data stack.