While massive, general-purpose Large Language Models (LLMs) dominate the market, they bring high latency, per-token costs, and serious data-privacy concerns to enterprise deployments. To address this, we engineered a custom, lightweight Natural Language Processing (NLP) engine from scratch. Built with Python, Keras, and TensorFlow, this specialized chatbot uses Recurrent Neural Networks (RNNs) to deliver accurate, domain-specific customer service in both English and Spanish.

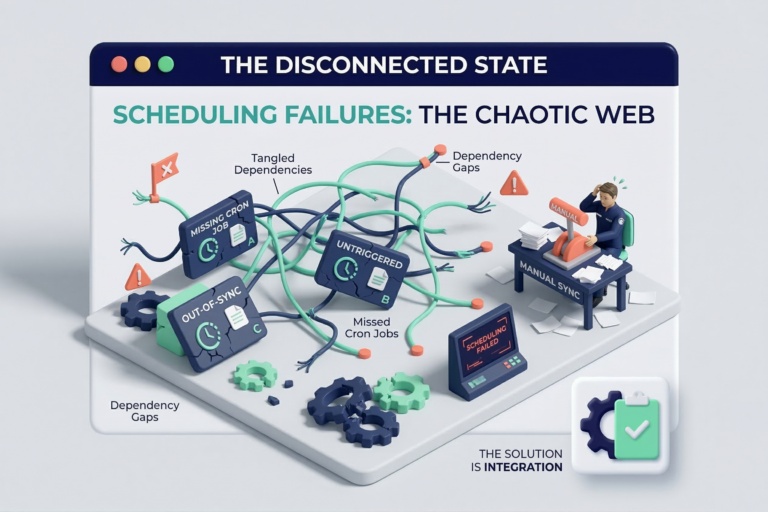

The Problem: The Overhead of Generalized AI

When a technology retailer needs to automate customer support, a generic AI model is often overkill. These models are prone to hallucinations and demand heavy computational resources to process simple, repetitive domain queries. Furthermore, mixing multiple languages in a single small-scale model often leads to context bleeding (vocabulary and phrasing from one language leaking into responses in the other) and degraded response accuracy. The challenge was to build a system that is computationally efficient, strictly bounded to the company’s knowledge base, and reliable in both languages.

The Solution: Dual-LSTM Architectural Design

Instead of forcing one model to learn two languages, we implemented a decoupled architecture: the system detects the language of each incoming query and routes it to a dedicated, language-specific neural network.

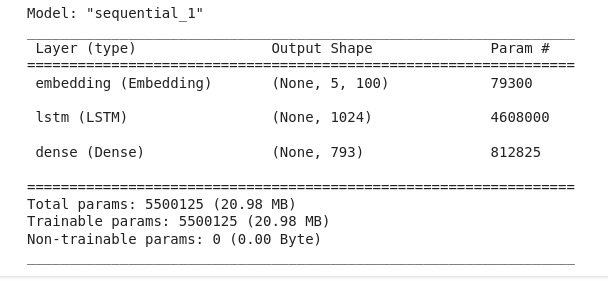
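The write-up does not say how the input language is detected. As a minimal sketch, assuming a simple stopword-overlap heuristic (a hypothetical stand-in; a production system might use a dedicated language-identification library instead), the detect-and-route step could look like this. `STOPWORDS`, `detect_language`, and `route` are illustrative names, and each language-specific model is represented as a plain callable.

```python
import re
from typing import Callable, Dict, Set

# Hypothetical, deliberately tiny stopword lists used only for this sketch.
STOPWORDS: Dict[str, Set[str]] = {
    "en": {"the", "my", "is", "how", "do", "i", "to", "not", "can", "what", "a"},
    "es": {"el", "la", "mi", "es", "cómo", "no", "puedo", "qué", "un", "una", "de"},
}

def detect_language(text: str, default: str = "en") -> str:
    """Return the language whose stopword list overlaps most with the text."""
    words = set(re.findall(r"\w+", text.lower()))  # \w is Unicode-aware, keeps accents
    scores = {lang: len(words & stop) for lang, stop in STOPWORDS.items()}
    best = max(scores, key=scores.get)
    # Fall back to the default language when no stopword matched at all.
    return best if scores[best] > 0 else default

def route(text: str, models: Dict[str, Callable[[str], str]]) -> str:
    """Send the query to the model registered for its detected language."""
    return models[detect_language(text)](text)

# Usage with placeholder callables standing in for the two trained networks.
models = {"en": lambda q: "english model", "es": lambda q: "spanish model"}
print(route("¿Cómo reinicio mi router?", models))  # spanish model
```

Keeping detection separate from the models means each network only ever sees its own language, which is the property the decoupled design relies on.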

- Long Short-Term Memory (LSTM) Architecture: We designed the core predictive engine around LSTM layers, which are well suited to sequential data because their gated memory cells carry information across a sequence. As shown in the architectural summary below, the model uses an Embedding layer followed by a 1024-unit LSTM core, for a compact total of roughly 5.4M parameters, small enough to run on edge servers or local infrastructure.

- Custom Tokenization & NLP Pipeline: We built a complete data preprocessing pipeline from the ground up. The system ingests raw customer service transcripts, tokenizes the phrases, converts them into integer sequences, and applies One-Hot Encoding to the outputs.

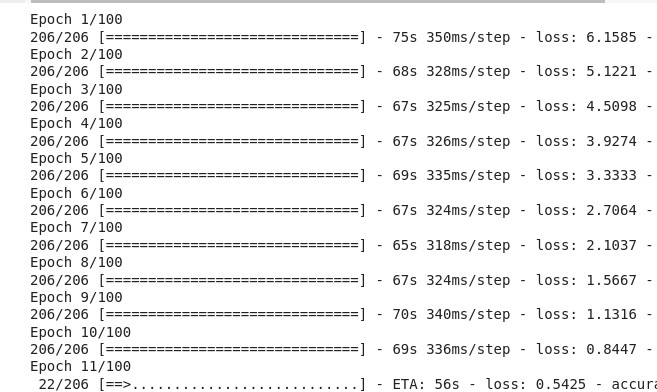
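To see where a parameter count of that order comes from, the sketch below applies the standard per-layer formulas for a Keras-style Embedding → LSTM → Dense (softmax) stack. The source gives only the 1024 LSTM units and the ~5.4M total; the single Dense output layer over the vocabulary, the vocabulary size, and the embedding dimension are assumptions of this sketch, so the printed total is illustrative and is not the project's actual figure.

```python
def embedding_params(vocab_size: int, embed_dim: int) -> int:
    # One trainable vector of length embed_dim per vocabulary entry.
    return vocab_size * embed_dim

def lstm_params(input_dim: int, units: int) -> int:
    # Four gates (input, forget, cell candidate, output), each with an input
    # kernel (input_dim x units), a recurrent kernel (units x units), and a bias.
    return 4 * units * (input_dim + units + 1)

def dense_params(input_dim: int, output_dim: int) -> int:
    # Weight matrix plus one bias per output unit.
    return (input_dim + 1) * output_dim

def model_params(vocab_size: int, embed_dim: int, units: int) -> int:
    """Total trainable parameters for Embedding -> LSTM -> Dense(vocab)."""
    return (embedding_params(vocab_size, embed_dim)
            + lstm_params(embed_dim, units)
            + dense_params(units, vocab_size))

# Hypothetical sizes: only units=1024 comes from the write-up.
print(model_params(vocab_size=1000, embed_dim=100, units=1024))  # 5733000
```

The LSTM term alone is 4 · 1024 · (d + 1025) for embedding dimension d, which is already about 4.2M even before adding d, so the 1024-unit core dominates the budget regardless of the exact vocabulary size.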
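The preprocessing steps described in the tokenization bullet (tokenize, map to integer sequences, one-hot encode the outputs) can be sketched in plain Python. This is a minimal illustration, not the project's code: it assumes a word-level next-word-prediction setup in which each training input is a fixed-size window of preceding word ids, left-padded with 0, and the target is the following word. The helper names are hypothetical; Keras offers comparable utilities such as `to_categorical` and `pad_sequences`.

```python
import re
from typing import Dict, List, Tuple

def tokenize(text: str) -> List[str]:
    """Lowercase the text and split it into word tokens (punctuation dropped)."""
    return re.findall(r"\w+", text.lower())

def build_vocab(phrases: List[str]) -> Dict[str, int]:
    """Assign each new word the next integer id; 0 is reserved for padding."""
    vocab: Dict[str, int] = {}
    for phrase in phrases:
        for word in tokenize(phrase):
            vocab.setdefault(word, len(vocab) + 1)
    return vocab

def one_hot(index: int, num_classes: int) -> List[int]:
    vec = [0] * num_classes
    vec[index] = 1
    return vec

def make_training_pairs(
    phrases: List[str], vocab: Dict[str, int], context_size: int
) -> Tuple[List[List[int]], List[List[int]]]:
    """Turn each phrase into (preceding-words window, one-hot next word) pairs."""
    num_classes = len(vocab) + 1  # +1 for the padding id 0
    X: List[List[int]] = []
    y: List[List[int]] = []
    for phrase in phrases:
        ids = [vocab[w] for w in tokenize(phrase)]
        for i in range(1, len(ids)):
            context = ids[max(0, i - context_size):i]
            # Left-pad short windows with 0 so every input has the same length.
            X.append([0] * (context_size - len(context)) + context)
            y.append(one_hot(ids[i], num_classes))
    return X, y

phrases = ["My laptop will not turn on"]
vocab = build_vocab(phrases)
X, y = make_training_pairs(phrases, vocab, context_size=3)
print(X[0], y[0])  # [0, 0, 1] [0, 0, 1, 0, 0, 0, 0]
```

Each phrase of n words yields n − 1 examples, so even a modest transcript set produces many training pairs.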

- Model Training & Loss Optimization: We compiled the model with categorical cross-entropy loss and the Adam optimizer. As illustrated in the training logs below, the network was trained for 100 epochs, and the loss fell sharply and steadily from 6.15 to below 1.0, with no sign of vanishing gradients (the loss kept decreasing rather than stalling).

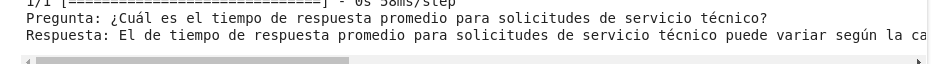

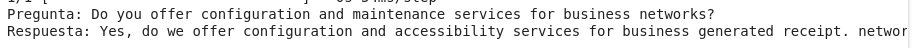

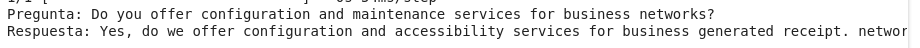

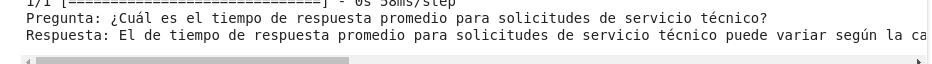
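For interpreting the training-loss figures quoted in the training bullet, note that categorical cross-entropy with a one-hot target reduces to the negative log-probability the model assigns to the correct next word, averaged over examples. The plain-Python sketch below mirrors that definition; Keras also clips probabilities to avoid log(0), and the `eps` value here is an assumption of this sketch. Two reference points follow from the formula: a uniform guess over V classes scores ln V, and an average loss below 1.0 means the geometric-mean probability assigned to the correct word exceeds e⁻¹ ≈ 0.37 on the data being measured.

```python
import math
from typing import Sequence

def categorical_crossentropy(
    y_true: Sequence[Sequence[float]],
    y_pred: Sequence[Sequence[float]],
    eps: float = 1e-7,
) -> float:
    """Mean over examples of -sum_k t_k * log(p_k)."""
    total = 0.0
    for t_row, p_row in zip(y_true, y_pred):
        # Clip probabilities from below so log() never sees 0.
        total -= sum(t * math.log(max(p, eps)) for t, p in zip(t_row, p_row))
    return total / len(y_true)

# A uniform guess over 4 classes costs ln(4), about 1.386 nats per example.
print(categorical_crossentropy([[0, 1, 0, 0]], [[0.25, 0.25, 0.25, 0.25]]))
```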

- Bilingual Inference & Prediction: During inference, the model reads a fixed-size context window (the sequence of preceding words) and predicts the highest-probability next token. Because it always selects the most probable token, its output is deterministic: the same input always yields the same response. As seen in the live prediction outputs below, the engine handles technical inquiries in both English and Spanish, delivering coherent, domain-accurate responses.
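The inference step in the bullet above is greedy next-token decoding. A minimal sketch, assuming a word-level vocabulary with 0 reserved for padding, a fixed context window, and a `predict_proba` callable standing in for the trained network (with Keras this would typically wrap `model.predict` on a batch of one, wiring the source does not show). Treating a predicted 0 as "stop" is an assumption of this sketch, and `toy_predict` is a hypothetical lookup table used only to make the example runnable.

```python
import re
from typing import Callable, Dict, List, Sequence

def generate(
    seed: str,
    vocab: Dict[str, int],
    predict_proba: Callable[[List[int]], Sequence[float]],
    context_size: int,
    max_new_tokens: int,
) -> str:
    """Greedily extend the seed text one highest-probability word at a time."""
    id_to_word = {i: w for w, i in vocab.items()}
    words = re.findall(r"\w+", seed.lower())
    for _ in range(max_new_tokens):
        ids = [vocab[w] for w in words if w in vocab]  # unknown words are skipped
        context = ids[-context_size:]
        context = [0] * (context_size - len(context)) + context  # left-pad with 0
        probs = predict_proba(context)
        next_id = max(range(len(probs)), key=lambda k: probs[k])  # argmax
        if next_id == 0:  # padding id predicted: treat as end of response
            break
        words.append(id_to_word[next_id])
    return " ".join(words)

# Hypothetical stand-in for the trained network: a fixed next-word table.
VOCAB = {"reset": 1, "the": 2, "router": 3}

def toy_predict(context: List[int]) -> List[float]:
    nxt = {0: 0, 1: 2, 2: 3, 3: 0}[context[-1]]
    probs = [0.0] * (len(VOCAB) + 1)
    probs[nxt] = 1.0
    return probs

print(generate("Reset", VOCAB, toy_predict, context_size=3, max_new_tokens=5))
# reset the router
```

Because the argmax is taken at every step and no sampling is involved, repeated calls with the same seed return the same continuation.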

The Impact

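This project demonstrates that effective AI automation does not always require massive third-party APIs. By engineering a custom dual-LSTM architecture, we delivered a privacy-first customer service engine that generates responses only from the company’s own transcripts, rather than from open-ended general knowledge that can surface off-topic or fabricated answers. It substantially reduces computational overhead compared with a general-purpose LLM while keeping the corporate knowledge base and user data under strict control.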

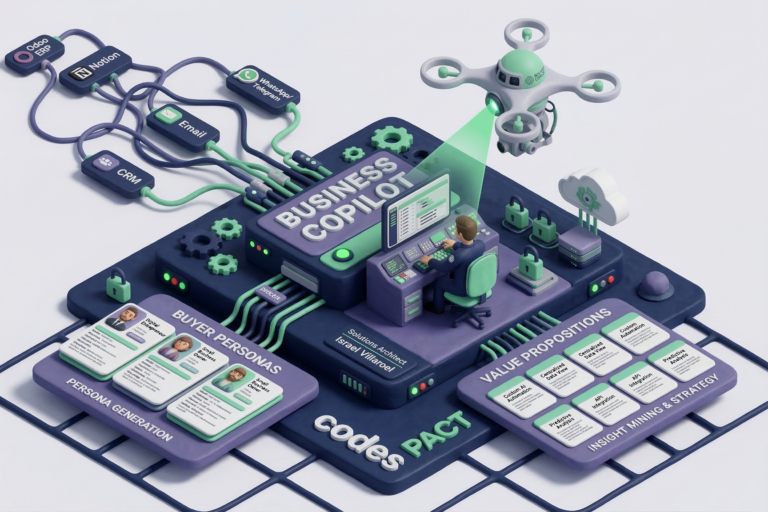

The Architecture Behind the Build

Complex integrations require a clear vision. The underlying architecture and core development of the Custom NLP Engine were spearheaded by our Solutions Architect, Israel Villaroel, ensuring the system was not just intelligent but built to scale and to deploy smoothly into real-world enterprise environments.