Every growing business eventually hits the same technical bottleneck: data silos. When your marketing metrics live in one platform, your transactional data in another, and your core application data in a fragmented database, gaining a holistic view of the company’s performance becomes a slow, manual nightmare.

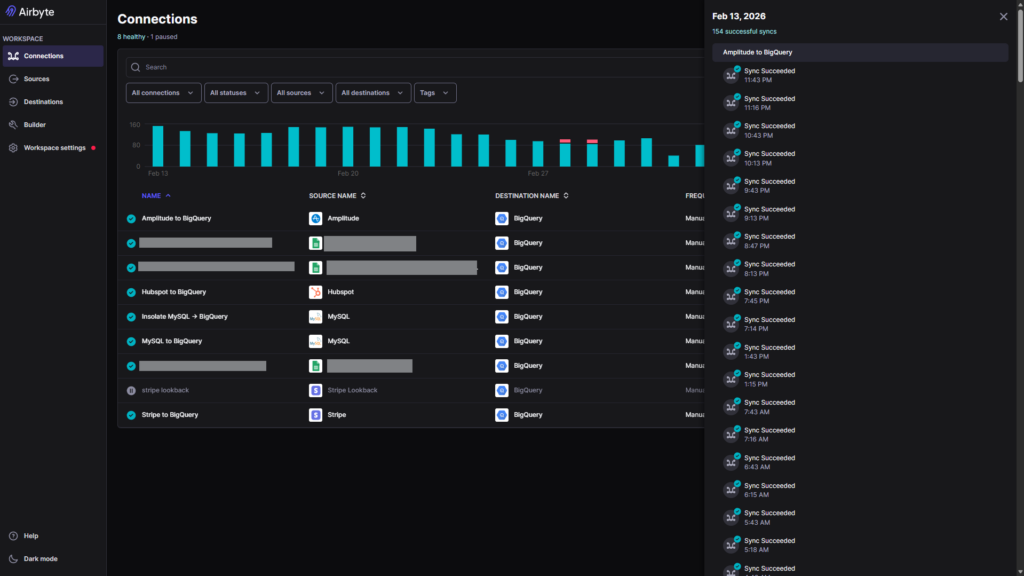

Recently, our data engineering team tackled this exact challenge on a project by building an automated, scalable data pipeline. Using Airbyte, we synchronized multiple disparate data sources into a single, centralized data warehouse, changing how the business works with its data.

Here is a look behind the scenes at how we built it.

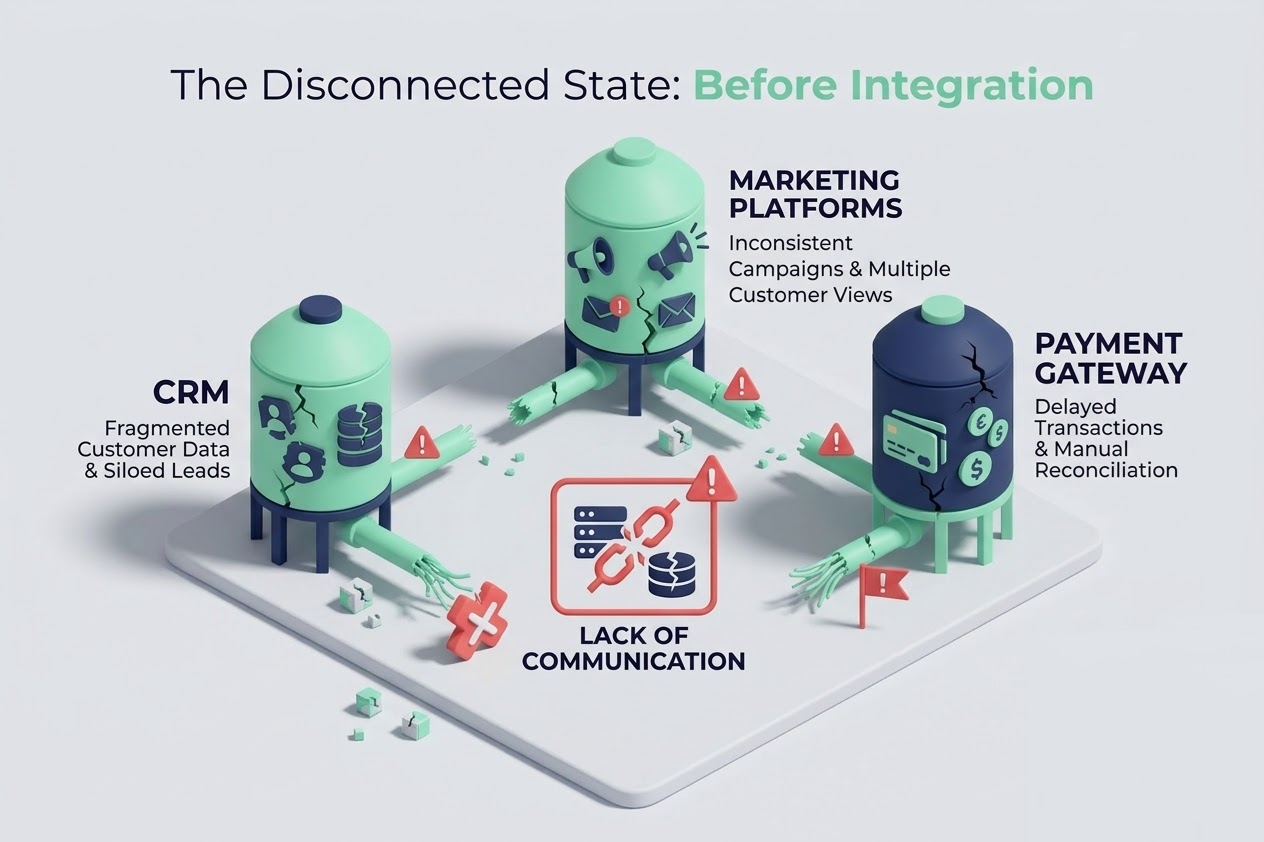

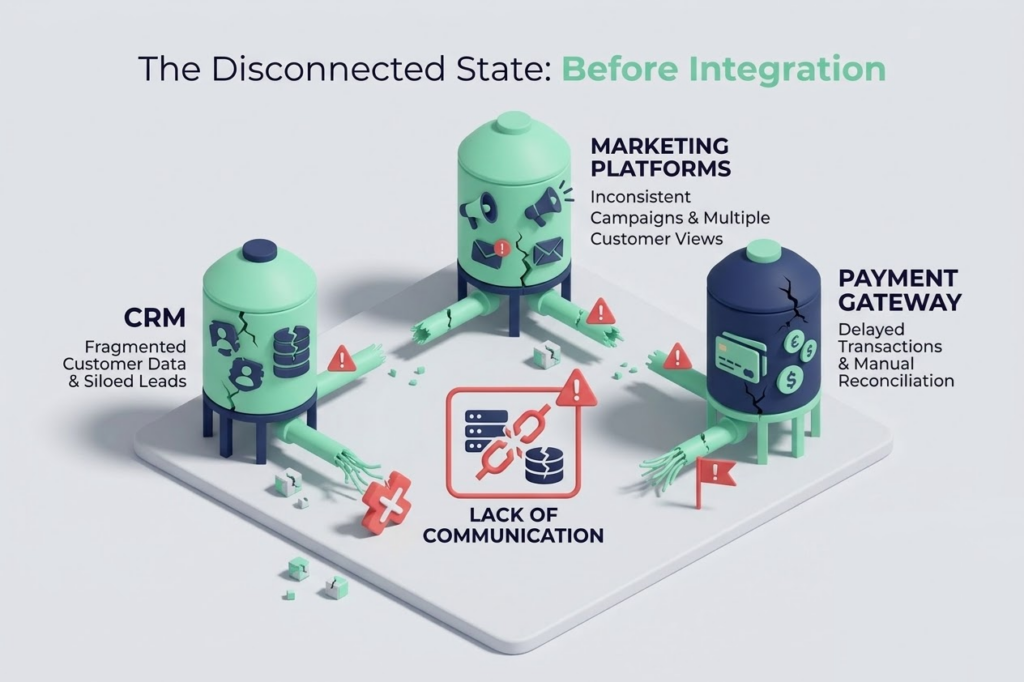

The Challenge: Fragmented Truth

Before this implementation, the data landscape was scattered. Analytics and reporting required manual data extraction, complex Excel merges, and hours of engineering time just to answer basic operational questions.

The primary pain points included:

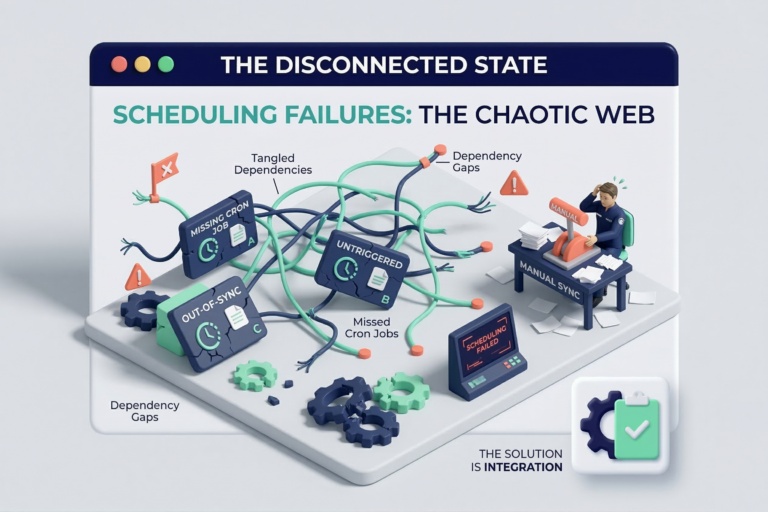

- High Latency: Reports were often days out of date due to the manual effort required to compile them.

- Inconsistent Logic: Different departments pulled data at different times, leading to conflicting metrics.

- Engineering Bottlenecks: Valuable engineering hours were wasted writing and maintaining custom API extraction scripts for every new tool the company adopted.

Our Solution: Modern Data Integration with Airbyte

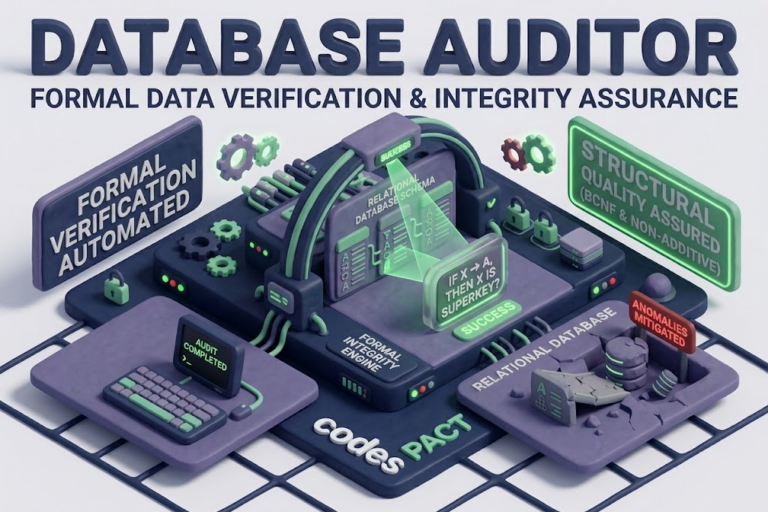

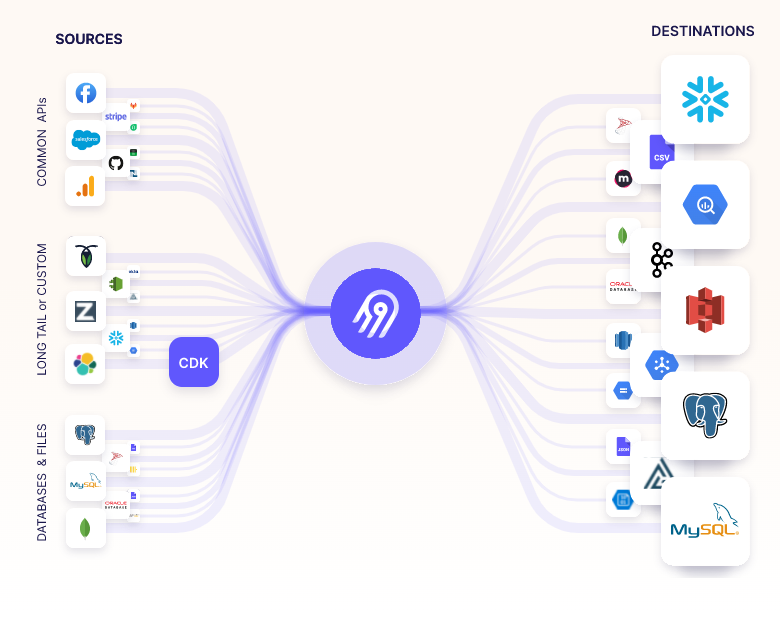

To solve this, we needed a robust ELT (Extract, Load, Transform) strategy. We chose Airbyte as our primary ingestion engine due to its extensive connector ecosystem, open-source flexibility, and reliable CDC (Change Data Capture) capabilities.

Our goal was simple: automate the extraction of raw data from all third-party services and operational databases, and load it reliably into a scalable cloud data warehouse.
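To make the ELT ordering concrete, here is a minimal sketch in Python. It is not our production setup: an in-memory SQLite database stands in for the cloud warehouse, and the `raw_charges` table, the `successful_charges` view, and the sample records are hypothetical names chosen for illustration. The point is only the order of operations: raw data is loaded untouched, and cleaning happens afterwards inside the warehouse.

```python
import sqlite3

# In-memory SQLite stands in for the cloud data warehouse (assumption).
warehouse = sqlite3.connect(":memory:")

# Extract: hypothetical records as a payment gateway API might return them.
extracted = [
    {"id": "ch_1", "amount_cents": "1250", "status": "succeeded"},
    {"id": "ch_2", "amount_cents": "9900", "status": "failed"},
    {"id": "ch_3", "amount_cents": "4000", "status": "succeeded"},
]

# Load: write the records as-is into a raw table, with no cleaning or typing.
warehouse.execute(
    "CREATE TABLE raw_charges (id TEXT, amount_cents TEXT, status TEXT)"
)
warehouse.executemany(
    "INSERT INTO raw_charges VALUES (:id, :amount_cents, :status)", extracted
)

# Transform: model the raw data inside the warehouse with SQL.
warehouse.execute(
    """
    CREATE VIEW successful_charges AS
    SELECT id, CAST(amount_cents AS INTEGER) / 100.0 AS amount
    FROM raw_charges
    WHERE status = 'succeeded'
    """
)

modeled = warehouse.execute(
    "SELECT id, amount FROM successful_charges ORDER BY id"
).fetchall()
total = warehouse.execute(
    "SELECT SUM(amount) FROM successful_charges"
).fetchone()[0]
print(modeled, total)
```

Because the raw table is kept intact, the transformation can be changed and re-run later without extracting the data again, which is the main practical argument for ELT over transforming before the load.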

The Architecture

Our team designed a streamlined architecture that prioritized automation and observability.

- The Sources: We configured Airbyte to securely connect to the necessary operational sources. This included pulling live transactional data via Postgres CDC, customer behavior metrics from the CRM, and financial data from payment gateways.

- The Engine: Airbyte handled the orchestration of these syncs. We set up incremental syncs so that each run moved only new or updated data instead of re-copying full tables, which reduced both compute costs and pipeline latency.

- The Destination: All raw data was securely loaded into our central Data Warehouse. This became the “Single Source of Truth.”

- The Transformation: Once the raw data landed in the warehouse, downstream transformation tools took over to clean, model, and prepare the data for the BI dashboards.
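The incremental sync idea from the architecture above can be sketched in a few lines of Python. This is a toy illustration of the general technique (a saved cursor plus an upsert by primary key), not Airbyte's implementation or API. The `IncrementalSync` class, the `updated_at` cursor field, and the sample `orders` rows are all hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class IncrementalSync:
    """Toy incremental sync: copy only rows changed since the last run."""

    # Saved cursor: the newest `updated_at` value seen so far.
    cursor: datetime = datetime.min.replace(tzinfo=timezone.utc)
    # Stand-in for the destination table: primary key -> latest row.
    destination: dict = field(default_factory=dict)

    def run(self, source_rows):
        # Extract: keep only rows whose cursor field is newer than the saved cursor.
        changed = [r for r in source_rows if r["updated_at"] > self.cursor]
        # Load: upsert by primary key so an update overwrites the older version.
        for row in changed:
            self.destination[row["id"]] = row
        # Advance the cursor so the next run skips everything already synced.
        if changed:
            self.cursor = max(r["updated_at"] for r in changed)
        return len(changed)


def ts(hour):
    """Helper for readable sample timestamps (hypothetical data)."""
    return datetime(2024, 1, 1, hour, tzinfo=timezone.utc)


orders = [
    {"id": 1, "status": "pending", "updated_at": ts(9)},
    {"id": 2, "status": "paid", "updated_at": ts(10)},
]

sync = IncrementalSync()
first = sync.run(orders)  # first run: every row is new

# Between runs, order 1 is updated and order 3 is created.
orders[0] = {"id": 1, "status": "paid", "updated_at": ts(11)}
orders.append({"id": 3, "status": "pending", "updated_at": ts(12)})
second = sync.run(orders)  # second run: only the two changed rows move

print(first, second, len(sync.destination))
```

One limitation of this sketch: the strict `>` comparison would skip a row that shares the exact timestamp of the saved cursor, and a cursor-based approach cannot see hard deletes at all. Log-based CDC, which we used for Postgres, is the usual way to capture deletes as well.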

The Results: Near Real-Time Intelligence

By implementing this Airbyte-driven pipeline, we replaced brittle, custom-coded integrations with a resilient, standardized infrastructure.

Key project outcomes:

- Zero-Maintenance Extractions: With Airbyte's maintained connectors absorbing upstream API changes and connector updates, we no longer spend engineering time maintaining custom Python extraction scripts.

- Near Real-Time Reporting: We tuned the sync schedules so that business stakeholders see data refreshed every few hours, rather than reports that were days out of date.

- Scalability for the Future: When the business adopts a new SaaS tool that has an available connector, we can now integrate its data into the central warehouse in minutes, not sprints.
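A freshness target like "every few hours" is easiest to keep when it is checked automatically. The sketch below shows one simple way such a check could look. It is an illustration only: the `stale_streams` function, the stream names, and the six-hour threshold are assumptions, and the last-sync timestamps would in practice come from whatever sync metadata your tooling exposes.

```python
from datetime import datetime, timedelta, timezone


def stale_streams(last_synced, now, max_age=timedelta(hours=6)):
    """Return the names of streams whose last successful sync is older than max_age."""
    return sorted(
        name for name, synced_at in last_synced.items() if now - synced_at > max_age
    )


# Hypothetical last-success timestamps per stream.
now = datetime(2024, 1, 1, 12, tzinfo=timezone.utc)
last_synced = {
    "orders": now - timedelta(hours=2),
    "crm_contacts": now - timedelta(hours=9),
    "payments": now - timedelta(hours=5),
}

stale = stale_streams(last_synced, now)
print(stale)
```

Run on a schedule, a check like this can alert the team when a source falls behind, before stakeholders notice outdated numbers in a dashboard.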

Looking Forward

Centralizing your data is the foundational first step toward advanced analytics and machine learning. With a reliable, Airbyte-powered data warehouse now in place, the focus can shift from finding the data to actually using it to drive business value.

If your organization is struggling with data silos and manual reporting, our team has the expertise to architect a modern data stack tailored to your needs. Reach out to us today to discuss your next data project.