Information velocity within technical industries is a critical bottleneck. Staying competitive means ingesting, processing, and retaining vast amounts of data from divergent sources—including technical research papers (PDFs), engineering blogs (RSS feeds), and ad-hoc team updates (Telegram). Manual synthesis is too slow.

Our firm was tasked with developing a unified, intelligent system to ingest this information, normalize it, and apply sophisticated AI processing to generate structured knowledge assets (flashcards and summaries) automatically.

We selected n8n as the workflow orchestration engine for this project due to its flexibility in combining diverse APIs, robust conditional logic, and seamless integration with emerging LLM (Large Language Model) stacks.

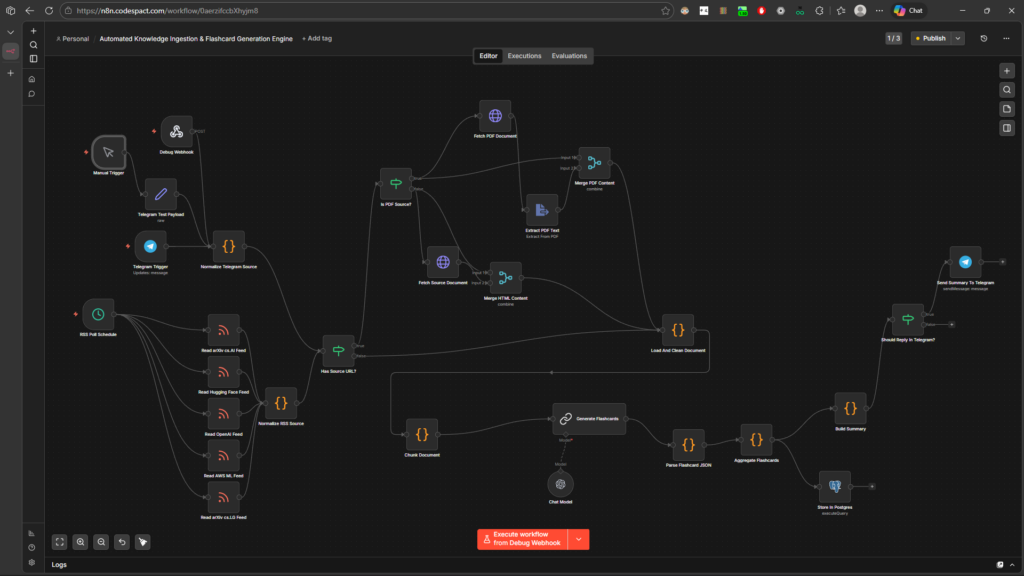

The Architecture: A Unified Ingestion & Synthesis Engine

The diagram above shows the complete architecture of the solution we engineered. This multi-layered workflow automates the entire knowledge management lifecycle, from raw data acquisition to structured storage and notification.

This workflow is structured into four main operational phases:

Phase 1: Diversified Ingestion and Trigger Management

A robust pipeline must accept data on different schedules and from different contexts. We implemented multiple entry points:

- Scheduled Technical Feeds (Cron): The workflow automatically pulls updates on a schedule from key technical sources, including Hugging Face Feed, Arxiv CS AI Feed, OpenAI Blog Feed, AWS ML Blog Feed, and Arxiv CS LG Feed.

- Ad-Hoc Team Input (Telegram): Engineering teams can forward relevant articles or snippets directly to a monitored Telegram Bot, immediately triggering the ingestion process.

- Manual & Webhook Triggers: Essential for developer testing, integration testing, and debugging.
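As a rough illustration of what a scheduled feed pull has to produce, the sketch below parses RSS 2.0 items into plain dictionaries. It is not the n8n node configuration: the sample feed, the `example.org` URLs, and the `parse_rss_items` helper are all hypothetical, and a real run would fetch the XML over HTTP on the cron schedule.

```python
import xml.etree.ElementTree as ET

# Hypothetical sample feed; a real run would download this XML from a feed URL.
SAMPLE_RSS = """<rss version="2.0">
  <channel>
    <title>Example ML Feed</title>
    <item>
      <title>Paper A</title>
      <link>https://example.org/papers/a.pdf</link>
      <description>Short abstract of paper A.</description>
    </item>
    <item>
      <title>Post B</title>
      <link>https://example.org/blog/b</link>
      <description>Short teaser for post B.</description>
    </item>
  </channel>
</rss>"""


def parse_rss_items(xml_text: str) -> list[dict]:
    """Return one dict per <item> with its title, link, and description."""
    root = ET.fromstring(xml_text)
    items = []
    for item in root.iter("item"):
        items.append({
            "title": (item.findtext("title") or "").strip(),
            "link": (item.findtext("link") or "").strip(),
            "description": (item.findtext("description") or "").strip(),
        })
    return items
```

Calling `parse_rss_items(SAMPLE_RSS)` yields two entries, each ready to be handed to the normalization step in the next phase.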

Phase 2: Data Normalization and Content Harvesting (ETL)

A significant challenge in this project was data heterogeneity. A Telegram payload is structured differently than an RSS entry, and extracting text from a web article requires a different approach than extracting text from a complex scientific PDF. We implemented an ETL (Extract, Transform, Load) logic layer:

- Normalization Nodes: The raw input from Telegram or RSS feeds passes through normalization nodes to create a standardized data object for the core processing engine.

- Conditional Routing: The workflow intelligently determines the content type (Has Source URL? -> Is PDF Source?).

- PDF Harvesting: If the source is a research paper, the workflow utilizes specialized nodes (Fetch PDF Document and Extract PDF Text) to harvest the content, filtering out unnecessary metadata.

- HTML/Text Harvesting: For articles and blog posts, it fetches and parses the HTML content (Fetch Source Document and Extract Text Content).
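The normalization and routing steps above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the workflow's actual node code: the `NormalizedItem` fields, the helper names, and the `.pdf` suffix check are hypothetical. The Telegram input follows the Bot API shape (`message.text`, `message.chat.id`), and the RSS input is assumed to be a dict with `title`, `link`, and `description` keys.

```python
import re
from dataclasses import dataclass
from typing import Optional
from urllib.parse import urlparse

URL_PATTERN = re.compile(r"https?://\S+")


@dataclass
class NormalizedItem:
    """Hypothetical standardized object shared by all entry points."""
    source: str              # "telegram" or "rss"
    title: str
    text: str
    url: Optional[str]
    chat_id: Optional[int]   # set only for Telegram submissions


def normalize_telegram(update: dict) -> NormalizedItem:
    """Map a Telegram update onto the shared shape."""
    message = update.get("message", {})
    text = message.get("text", "")
    match = URL_PATTERN.search(text)
    return NormalizedItem(
        source="telegram",
        title=text[:80],
        text=text,
        url=match.group(0) if match else None,
        chat_id=message.get("chat", {}).get("id"),
    )


def normalize_rss(entry: dict) -> NormalizedItem:
    """Map a parsed RSS entry onto the shared shape."""
    return NormalizedItem(
        source="rss",
        title=entry.get("title", ""),
        text=entry.get("description", ""),
        url=entry.get("link") or None,
        chat_id=None,
    )


def route(item: NormalizedItem) -> str:
    """Mirror the two checks: Has Source URL? then Is PDF Source?"""
    if not item.url:
        return "text_only"
    # Assumption: a ".pdf" path suffix marks a PDF source.
    path = urlparse(item.url).path.lower()
    return "pdf" if path.endswith(".pdf") else "html"
```

The suffix test is the weakest part of this sketch, since some PDF links carry no extension. Inspecting the response's `Content-Type` header after the fetch is likely a more reliable signal.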

Phase 3: AI-Driven Analysis and Flashcard Generation (RAG Pattern)

The core value proposition of this system is the intelligent synthesis of information. We didn’t just feed raw text into an LLM; we engineered a robust processing chain:

- Load and Clean Document: The extracted text is sanitized to remove noise and formatted for analysis.

- Vector Retrieval (RAG): The sanitized text is fed through a RAG (Retrieval-Augmented Generation) chain. It is chunked (Chunk Document) and then cross-referenced against a vector store (Fetch Relevant Snippets). This ensures the AI model operates with precise context.

- Flashcard Synthesis (Structured Output): A tailored prompt is sent to the AI chat model to synthesize the information specifically into high-quality flashcards (question/answer format). A crucial engineering step was implemented here: forcing the LLM to output valid JSON (Parse Flashcard JSON), ensuring it can be reliably used in downstream applications.

- AI Summary: Simultaneously, a second prompt generates a high-level technical summary of the entire source.
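Two of the steps above lend themselves to a short sketch: splitting the document into chunks and validating the model's flashcard output. The code below is an illustration under assumptions, not the workflow's implementation. The character-window chunking with overlap, the default sizes, and the `question` and `answer` key names are all hypothetical. Retrieval is left out because it depends on the embedding model and vector store, which this article does not specify.

```python
import json

FENCE = "`" * 3  # Markdown code fence that models sometimes wrap JSON in


def chunk_text(text: str, size: int = 500, overlap: int = 50) -> list[str]:
    """Split text into fixed-size character windows that overlap slightly."""
    if size <= 0 or not 0 <= overlap < size:
        raise ValueError("size must be positive and 0 <= overlap < size")
    step = size - overlap
    return [text[i:i + size] for i in range(0, len(text), step)]


def parse_flashcards(raw: str) -> list[dict]:
    """Parse model output into a list of question/answer dicts.

    Raises ValueError when the output is not a JSON array of objects that
    each carry a non-empty "question" and "answer" (assumed key names).
    """
    cleaned = raw.strip()
    if cleaned.startswith(FENCE):
        # Drop the surrounding fence and an optional "json" language tag.
        cleaned = cleaned.strip("`").strip()
        if cleaned.lower().startswith("json"):
            cleaned = cleaned[4:]
    try:
        data = json.loads(cleaned)
    except json.JSONDecodeError as exc:
        raise ValueError(f"model output is not valid JSON: {exc}") from exc
    if not isinstance(data, list):
        raise ValueError("expected a JSON array of flashcards")
    cards = []
    for entry in data:
        if not isinstance(entry, dict):
            raise ValueError("each flashcard must be a JSON object")
        question = str(entry.get("question", "")).strip()
        answer = str(entry.get("answer", "")).strip()
        if not question or not answer:
            raise ValueError("flashcard needs a non-empty question and answer")
        cards.append({"question": question, "answer": answer})
    return cards
```

Raising on malformed output, rather than silently dropping cards, gives the workflow a clear point at which to retry the prompt or route the item to an error branch.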

Phase 4: Closing the Loop – Persistence and Notification

The pipeline concludes by persisting the data and providing immediate value to the team:

- Data Aggregation: The synthesized flashcards from the JSON parser are collected into a clean array.

- Postgres Storage: All structured assets (source metadata, original text, flashcards, and summary) are committed to a relational database (Store in Postgres).

- Conditional Telegram Notification: The workflow checks if the trigger was an ad-hoc team submission (Should Reply in Telegram?). If so, the bot immediately replies with the AI-generated summary and a count of the new flashcards generated, providing instant confirmation and synthesis to the user.
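The persistence and reply logic above can be approximated as follows. To keep the sketch runnable without a database server, an in-memory SQLite database stands in for Postgres. The table name, column names, and reply wording are hypothetical and do not come from the production schema.

```python
import json
import sqlite3

# Assumption: SQLite stands in for Postgres here. Table and column names are
# hypothetical. Flashcards are stored as a JSON array serialized to text.
SCHEMA = """
CREATE TABLE knowledge_assets (
    id INTEGER PRIMARY KEY,
    source TEXT NOT NULL,
    url TEXT,
    original_text TEXT NOT NULL,
    summary TEXT NOT NULL,
    flashcards TEXT NOT NULL
)
"""


def store_asset(conn, source, url, original_text, summary, flashcards) -> int:
    """Insert one processed document and return its row id."""
    cur = conn.execute(
        "INSERT INTO knowledge_assets "
        "(source, url, original_text, summary, flashcards) "
        "VALUES (?, ?, ?, ?, ?)",
        (source, url, original_text, summary, json.dumps(flashcards)),
    )
    conn.commit()
    return cur.lastrowid


def build_reply(source, summary, flashcards):
    """Return the Telegram reply text, or None for non-Telegram triggers."""
    if source != "telegram":
        return None
    return f"{summary}\n\nNew flashcards: {len(flashcards)}"
```

To try it, open a connection with `sqlite3.connect(":memory:")`, run `conn.execute(SCHEMA)`, and then call `store_asset` followed by `build_reply`. Against a real Postgres instance, a driver such as psycopg would use `%s` placeholders instead of `?`, and a `JSONB` column would be a natural fit for the flashcards.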

Conclusion and Impact

This showcase piece demonstrates advanced proficiency in integrating disparate systems, handling heterogeneous data at scale, and implementing complex AI workflows beyond simple API calls. By moving from manual content consumption to an automated, RAG-enabled ETL process, we have built a system that turns the velocity of technical information from a challenge into a sustainable competitive advantage.